---
license: apache-2.0
language:
- en
tags:
- knowledge
- Retrieval
- Reasoning
- Common Crawl
- MATH
size_categories:
- 100B<n<1T
---

Knowledge Pile is a knowledge-related dataset built with [Query of CC](https://arxiv.org/abs/2401.14624).

This dataset is a subset of Knowledge Pile (about 40 GB on disk). The full dataset has been released at [\[🤗 knowledge_pile_full\]](https://huggingface.co/datasets/Query-of-CC/knowledge_pile_full/), totaling 735 GB on disk and 188B tokens (counted with the Llama 2 tokenizer).
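
The sizes quoted above allow a quick back-of-the-envelope check. The sketch below computes on-disk bytes per token for the full release and scales the token count linearly to the ~40 GB subset. It assumes (this is not stated in the card) that GB and "B tokens" both mean 10^9 and that the subset has the same bytes-per-token density as the full release; on-disk size also includes any storage-format overhead or compression, so the subset figure is only a rough estimate, not a published number. The helper names are introduced here for illustration.

```python
# Figures quoted in the card; the subset size is approximate.
FULL_DISK_GB = 735
FULL_TOKENS_B = 188  # billions of Llama 2 tokens
SUBSET_DISK_GB = 40


def disk_bytes_per_token(disk_gb: float, tokens_b: float) -> float:
    """On-disk bytes per token; the 1e9 factors of GB and 'B tokens' cancel."""
    return disk_gb / tokens_b


def estimate_subset_tokens_b(subset_gb: float, full_gb: float, full_tokens_b: float) -> float:
    """Scale the token count linearly with disk size.

    ASSUMPTION: the subset has the same bytes-per-token density as the full set.
    """
    return full_tokens_b * subset_gb / full_gb


if __name__ == "__main__":
    print(f"{disk_bytes_per_token(FULL_DISK_GB, FULL_TOKENS_B):.2f} bytes/token on disk")
    print(f"~{estimate_subset_tokens_b(SUBSET_DISK_GB, FULL_DISK_GB, FULL_TOKENS_B):.1f}B tokens in the subset")
```

Under these assumptions the full release works out to roughly 3.9 bytes per token on disk, and the subset to roughly 10B tokens.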

## *Query of CC*

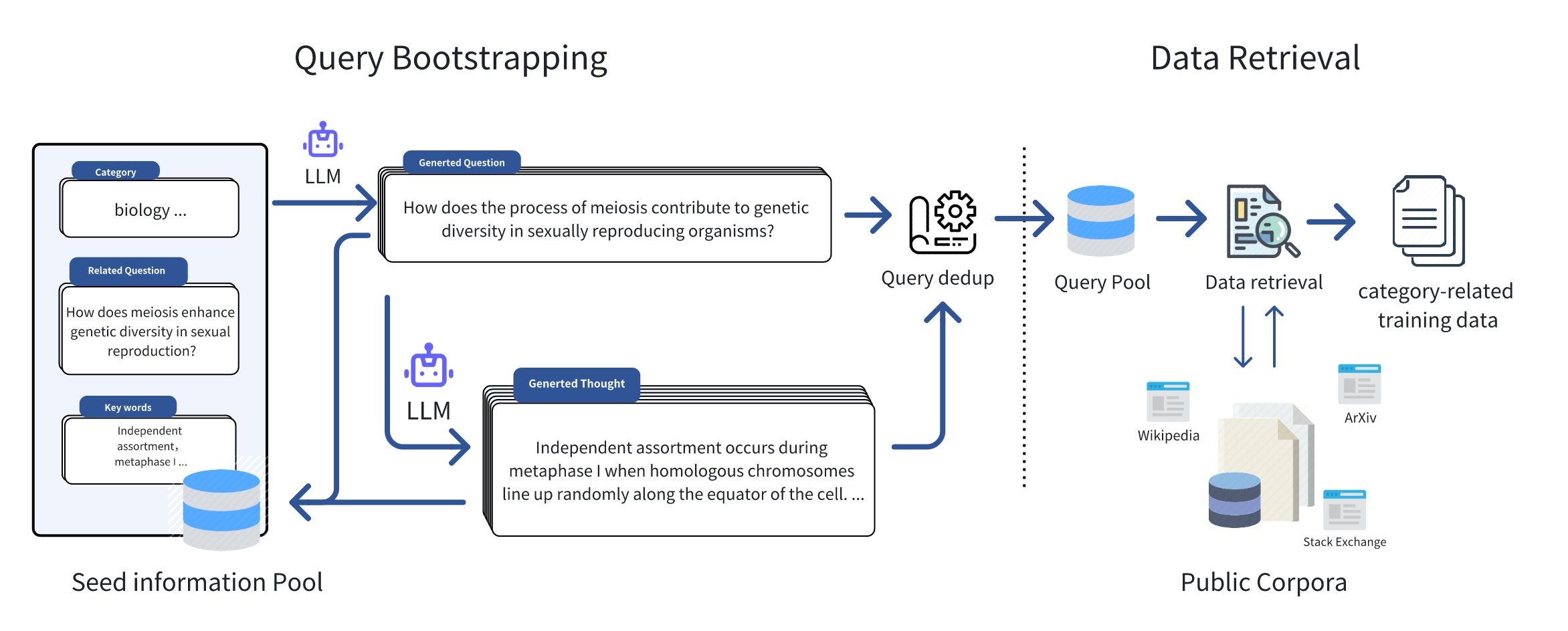

As shown in the figure above, we first collected seed information in specific domains, such as keywords, frequently asked questions, and textbooks, to serve as inputs to the Query Bootstrapping stage. Leveraging the strong generalization ability of large language models, we can readily expand this seed information into a large number of domain-relevant queries. Inspired by Self-Instruct and WizardLM, query bootstrapping comprises two expansion stages, **Question Extension** and **Thought Generation**, which extend the queries in breadth and in depth respectively, so that the retrieved domain-related data has a broader scope and deeper reasoning. We then used these queries to retrieve relevant documents from public corpora and, after deduplication and filtering, formed the final training dataset.
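
To make the stages concrete, here is a minimal, self-contained Python sketch of the flow just described: seed queries, breadth and depth expansion, retrieval, then deduplication and filtering. Everything in it is an illustrative assumption rather than the authors' implementation: `extend_question` and `generate_thought` are hypothetical callables standing in for LLM prompts, the term-overlap scorer is a toy substitute for a real retriever, deduplication is exact-match hashing, and the word-count threshold is a placeholder quality filter.

```python
import hashlib
import re
from typing import Callable, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercased alphanumeric terms; a deliberately crude tokenizer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def bootstrap_queries(
    seeds: List[str],
    extend_question: Callable[[str], List[str]],  # hypothetical LLM call (breadth)
    generate_thought: Callable[[str], str],        # hypothetical LLM call (depth)
    rounds: int = 1,
) -> List[str]:
    """Expand seeds round by round, keeping each query once, in first-seen order."""
    queries = list(dict.fromkeys(seeds))
    frontier = list(queries)
    for _ in range(rounds):
        produced: List[str] = []
        for q in frontier:
            produced.extend(extend_question(q))   # Question Extension (breadth)
            produced.append(generate_thought(q))  # Thought Generation (depth)
        # Only genuinely new queries are expanded in the next round.
        frontier = [q for q in dict.fromkeys(produced) if q not in queries]
        queries.extend(frontier)
    return queries


def retrieve(query: str, corpus: List[str], k: int = 2) -> List[str]:
    """Toy retriever: rank documents by shared terms (a stand-in for e.g. BM25)."""
    q_terms = tokenize(query)
    scored = [(len(q_terms & tokenize(doc)), doc) for doc in corpus]
    hits = [(s, d) for s, d in scored if s > 0]
    hits.sort(key=lambda sd: -sd[0])  # stable sort: ties keep corpus order
    return [d for _, d in hits[:k]]


def build_dataset(queries: List[str], corpus: List[str], k: int = 2, min_words: int = 3) -> List[str]:
    """Retrieve for every query, drop exact duplicates, apply a placeholder length filter."""
    seen: Set[str] = set()
    dataset: List[str] = []
    for q in queries:
        for doc in retrieve(q, corpus, k):
            digest = hashlib.sha256(doc.strip().lower().encode("utf-8")).hexdigest()
            if digest in seen:
                continue  # exact-duplicate removal
            seen.add(digest)
            if len(doc.split()) >= min_words:  # placeholder quality filter
                dataset.append(doc)
    return dataset


if __name__ == "__main__":
    queries = bootstrap_queries(
        ["What is a prime number?"],
        extend_question=lambda q: [q.replace("prime number", "composite number")],
        generate_thought=lambda q: "Step by step: " + q,
    )
    corpus = [
        "A prime number has exactly two divisors.",
        "A composite number has more than two divisors.",
        "A prime number has exactly two divisors.",  # verbatim duplicate
        "Primes.",  # shares no terms with any query
    ]
    for doc in build_dataset(queries, corpus):
        print(doc)
```

Keeping expansion, retrieval, and cleaning as separate functions mirrors the stages in the paragraph and lets any one of them be swapped (for example, a real LLM client or a BM25 index) without touching the others.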

## **Knowledge Pile** Statistics

Based on *Query of CC*, we built a high-quality knowledge dataset, **Knowledge Pile**. As shown in the figure below, compared with other datasets in the academic and mathematical reasoning domains, we obtained a large-scale, high-quality knowledge dataset at lower cost and without manual intervention. Automated query bootstrapping lets us efficiently capture information related to the seed queries. **Knowledge Pile** covers not only mathematical reasoning data but also rich knowledge-oriented corpora from fields such as biology and physics, broadening its potential for research and applications.

<img src="https://github.com/ngc7292/query_of_cc/blob/master/images/query_of_cc_timestamp_prop.png?raw=true" width="300px" style="center"/>

The table below lists the 10 web domains contributing the largest share of **Knowledge Pile**, mainly academic websites, high-quality forums, and knowledge-oriented sites. The figure above breaks down the timestamps of the data sources in **Knowledge Pile** by year. A large portion of **Knowledge Pile** comes from recent years, and the proportion decreases for earlier years. This trend can be attributed to the exponential growth of internet data and to the inherent timeliness of **Knowledge Pile**.

| **Web Domain**             | **Count** |
|:---------------------------|----------:|
| en.wikipedia.org           | 398833    |
| www.semanticscholar.org    | 141268    |
| slideplayer.com            | 108177    |
| www.ncbi.nlm.nih.gov       | 97009     |
| link.springer.com          | 85357     |
| www.ipl.org                | 84084     |
| pubmed.ncbi.nlm.nih.gov    | 68934     |
| www.reference.com          | 61658     |
| www.bartleby.com           | 60097     |
| quizlet.com                | 56752     |
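
The table gives raw counts for the top 10 domains only, so it cannot yield each domain's share of the whole dataset. As a rough illustration, the sketch below normalizes the counts within these 10 domains; `TOP_DOMAINS` simply copies the table, and `shares` is a helper introduced here for illustration.

```python
from typing import Dict

# Raw counts copied from the table above; only the top 10 domains are listed there.
TOP_DOMAINS: Dict[str, int] = {
    "en.wikipedia.org": 398833,
    "www.semanticscholar.org": 141268,
    "slideplayer.com": 108177,
    "www.ncbi.nlm.nih.gov": 97009,
    "link.springer.com": 85357,
    "www.ipl.org": 84084,
    "pubmed.ncbi.nlm.nih.gov": 68934,
    "www.reference.com": 61658,
    "www.bartleby.com": 60097,
    "quizlet.com": 56752,
}


def shares(counts: Dict[str, int]) -> Dict[str, float]:
    """Each domain's fraction of the combined count of the listed domains only."""
    total = sum(counts.values())
    return {domain: n / total for domain, n in counts.items()}


if __name__ == "__main__":
    print(f"combined top-10 count: {sum(TOP_DOMAINS.values())}")
    for domain, frac in sorted(shares(TOP_DOMAINS).items(), key=lambda kv: -kv[1]):
        print(f"{domain:25s} {frac:7.2%}")
```

The combined count of the listed domains is 1,162,169, of which en.wikipedia.org accounts for about 34%.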

### Citation

```
@article{fei2024query,
  title={Query of CC: Unearthing Large Scale Domain-Specific Knowledge from Public Corpora},
  author={Fei, Zhaoye and Shao, Yunfan and Li, Linyang and Zeng, Zhiyuan and Yan, Hang and Qiu, Xipeng and Lin, Dahua},
  journal={arXiv preprint arXiv:2401.14624},
  year={2024}
}
```